Type Parameters: T - the type of elements returned by the iterator

All Known Subinterfaces: BeanContext, BeanContextServices, BlockingDeque, BlockingQueue, Collection, Deque, DirectoryStream, List, NavigableSet, Path, Queue, SecureDirectoryStream, Set, SortedSet, TransferQueue

All Known Implementing Classes: AbstractCollection, AbstractList, AbstractQueue, AbstractSequentialList, AbstractSet, ArrayBlockingQueue, ArrayDeque, ArrayList, AttributeList, BatchUpdateException, BeanContextServicesSupport, BeanContextSupport, ConcurrentHashMap.

Upon restart, Logstash resumes processing the events in the persistent queue as well as accepting new events from inputs.

If Logstash is abnormally terminated, any in-flight events will not have been ACKed and will be reprocessed by filters and outputs when Logstash is restarted. Logstash processes events in batches, so it is possible that for any given batch, some of that batch may have been successfully completed, but not recorded as ACKed, when an abnormal termination occurs.
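The effect of an abnormal termination in the middle of a batch can be illustrated with a small simulation. This is only a sketch under stated assumptions: `process_batch`, the event names, and the `crash_after` parameter are hypothetical and are not part of Logstash. It shows why events that already reached an output can be delivered a second time after a restart.

```python
def process_batch(pending, acked, delivered, batch_size, crash_after=None):
    """Deliver one batch of un-ACKed events (hypothetical helper).

    If crash_after is set, simulate an abnormal termination after that
    many events of the batch were delivered. The batch is ACKed only
    when every event in it has been delivered.
    """
    batch = [e for e in pending if e not in acked][:batch_size]
    for i, event in enumerate(batch):
        if crash_after is not None and i == crash_after:
            return False  # crash: nothing in this batch is recorded as ACKed
        delivered.append(event)
    acked.update(batch)
    return True


pending = ["e1", "e2", "e3", "e4"]  # events stored in the queue
acked = set()                       # events recorded as fully processed
delivered = []                      # what the output actually received

# First run: the process dies after two of the four events were delivered.
process_batch(pending, acked, delivered, batch_size=4, crash_after=2)

# Restart: every event that was never ACKed is processed again.
process_batch(pending, acked, delivered, batch_size=4)

print(delivered)  # ['e1', 'e2', 'e1', 'e2', 'e3', 'e4']
```

In this toy run `e1` and `e2` reach the output twice, so anything downstream of the output has to tolerate duplicates.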

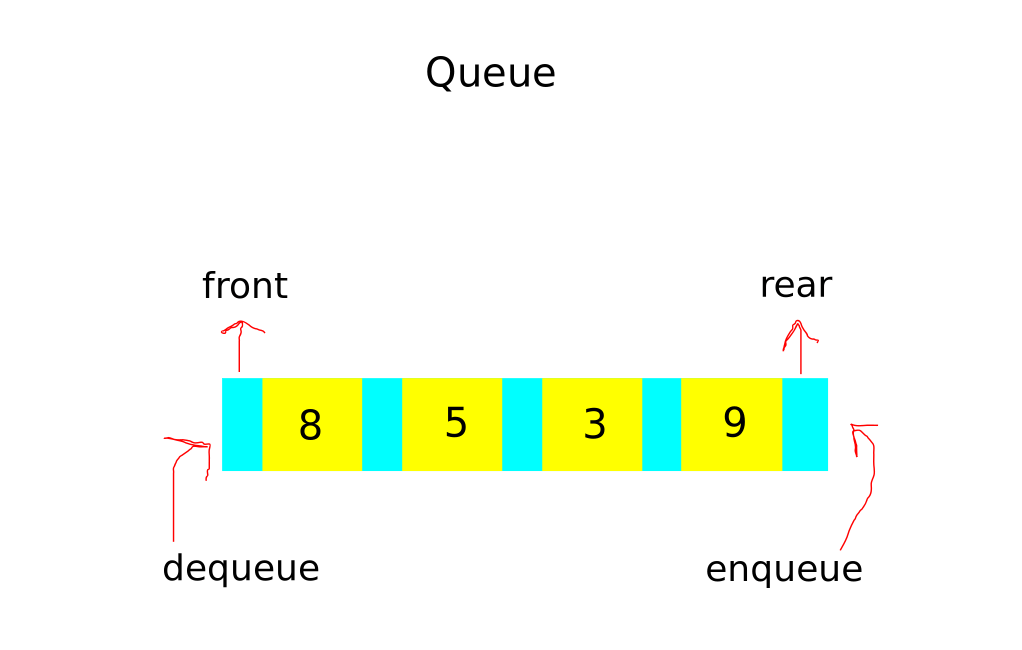

Unless overridden in pipelines.yml or central management, each persistent queue is sized at the value of queue.max_bytes.

The queue sits between the input and filter stages. When an input has events ready to process, it writes them to the queue. When the write to the queue is successful, the input can send an acknowledgement to its data source.

When processing events from the queue, Logstash acknowledges events as completed, within the queue, only after filters and outputs have completed. The queue keeps a record of events that have been processed by the pipeline. An event is recorded as processed (in this document, called "acknowledged" or "ACKed") if, and only if, the event has been processed completely by the Logstash pipeline.

What does acknowledged mean? This means the event has been handled by all configured filters and outputs. For example, if you have only one output, Elasticsearch, an event is ACKed when the Elasticsearch output has successfully sent this event to Elasticsearch.

During a normal shutdown (CTRL+C or SIGTERM), Logstash stops reading from the queue and finishes processing the in-flight events being processed by the filters and outputs.
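The bookkeeping behind acknowledgements can be sketched with a toy in-memory queue. Everything here is an assumption made for illustration: the `AckQueue` class, its method names, and the upper-casing "output" are hypothetical and do not reflect how Logstash implements its queue. The sketch only shows the ordering of steps: an input writes an event, a worker reads a batch, and the batch is ACKed only after the output has handled it.

```python
from collections import deque


class AckQueue:
    """Toy stand-in for a queue that tracks acknowledgements (hypothetical)."""

    def __init__(self):
        self._unread = deque()  # written by inputs, not yet read by a worker
        self._in_flight = []    # read by a worker, not yet ACKed

    def write(self, event):
        """Called by an input. Returning True stands in for a successful
        write, after which the input may acknowledge its own data source."""
        self._unread.append(event)
        return True

    def read_batch(self, size):
        """Hand up to `size` events to a worker and mark them in flight."""
        count = min(size, len(self._unread))
        batch = [self._unread.popleft() for _ in range(count)]
        self._in_flight.extend(batch)
        return batch

    def ack(self, batch):
        """Record a batch as fully processed by filters and outputs."""
        for event in batch:
            self._in_flight.remove(event)

    def unacked(self):
        """Number of events the queue still has to account for."""
        return len(self._unread) + len(self._in_flight)


q = AckQueue()
for event in ["a", "b", "c"]:
    q.write(event)

sent = []
batch = q.read_batch(2)
for event in batch:
    sent.append(event.upper())  # stand-in for filters plus a single output

before_ack = q.unacked()  # 2 in flight + 1 unread
q.ack(batch)              # only now is the batch recorded as processed
after_ack = q.unacked()   # 1 unread event remains
print(before_ack, after_ack)  # 3 1
```

Keeping the ACK as the last step is what lets a restart find and reprocess any batch that was interrupted.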

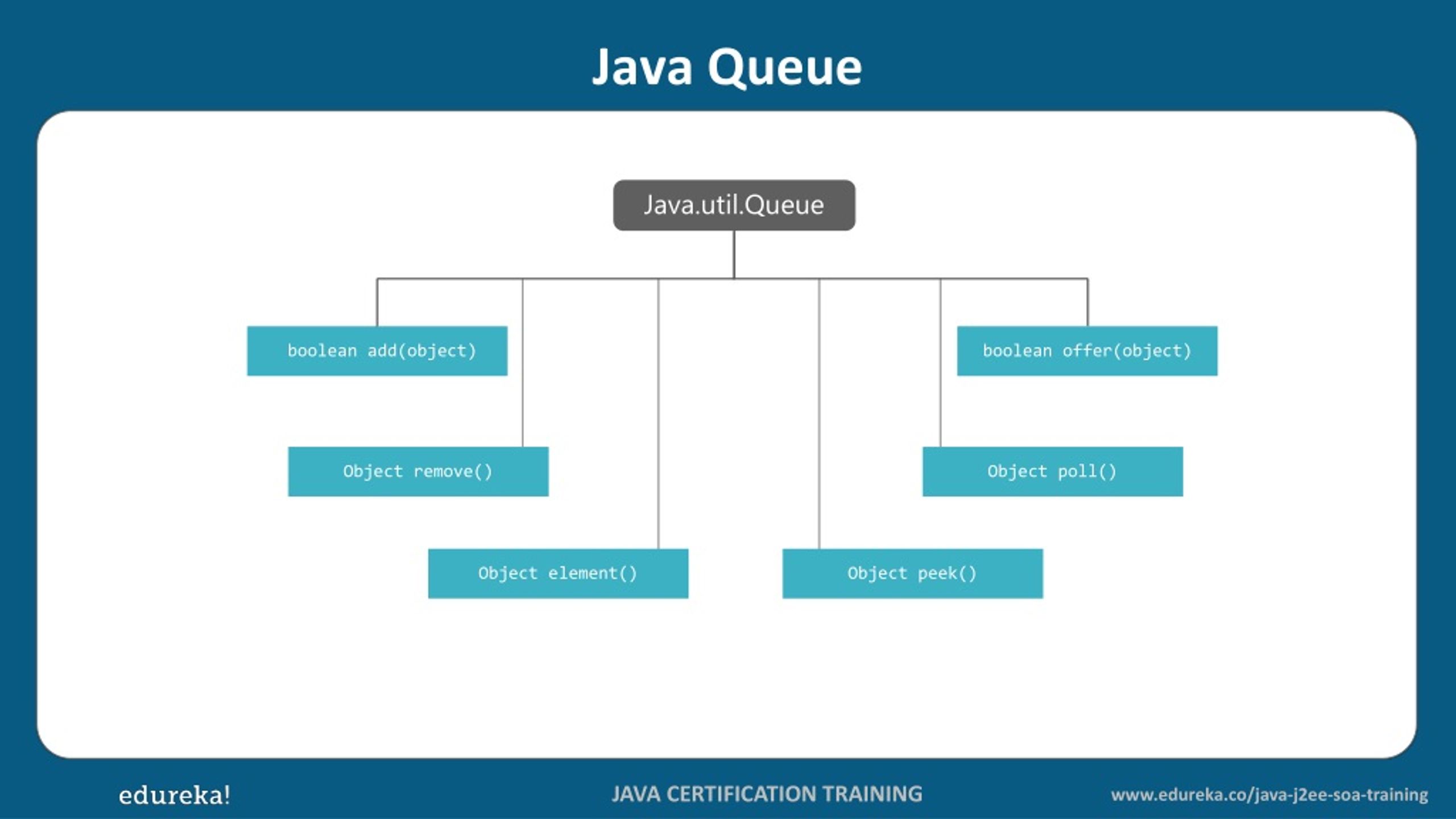

queue.max_bytes: The total capacity of each queue in number of bytes.

queue.max_events: The maximum number of events not yet read by the pipeline worker. We use this setting for internal testing. Users generally shouldn't be changing this value.

queue.drain: Specify true if you want Logstash to wait until the persistent queue is drained before shutting down. The amount of time it takes to drain the queue depends on the number of events that have accumulated in the queue. Therefore, you should avoid using this setting unless the queue, even when full, is relatively small and can be drained quickly.

queue.page_capacity: The queue data consists of append-only files called "pages." This value sets the maximum size of a queue page in bytes. The default size of 64mb is a good value for most users, and changing this value is unlikely to have performance benefits. If you change the page capacity of an existing queue, the new size applies only to the new page.

path.queue: The directory path where the data files will be stored. By default, the files are stored in path.data/queue.

BlockingQueue: A Queue that additionally supports operations that wait for the queue to become non-empty when retrieving an element, and wait for space to become available in the queue when storing an element.

Creates a PriorityBlockingQueue with the specified initial capacity that orders its elements according to their natural ordering. Parameters: initialCapacity - the initial capacity for this priority queue. Throws: IllegalArgumentException - if initialCapacity is less than 1.
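Two of the points above come down to simple arithmetic: how many pages a queue of a given total capacity spans, and how long a full queue might take to drain. The helpers below are a rough sketch, not Logstash code. The function names, the example capacity of 1024 mb, the event count, and the throughput figure are all assumed values chosen for illustration, and 1 mb is assumed to mean 1024 * 1024 bytes.

```python
import math

MB = 1024 * 1024  # assumption: 1 mb treated as 1024 * 1024 bytes


def pages_spanned(max_bytes, page_capacity=64 * MB):
    """Rough number of page files a queue of max_bytes spans (hypothetical)."""
    return math.ceil(max_bytes / page_capacity)


def drain_seconds(queued_events, events_per_second):
    """Rough time to drain a queue at a steady processing rate (hypothetical)."""
    return queued_events / events_per_second


# Example values are assumptions, not measurements.
print(pages_spanned(1024 * MB))         # 16
print(drain_seconds(1_000_000, 5_000))  # 200.0
```

The second figure illustrates why waiting for a drain on shutdown only makes sense when the backlog is small relative to the processing rate.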

By default, persistent queues are disabled (default: queue.type: memory). Specify persisted to enable persistent queues.
